GeoAI-Powered Platforms

Transforming Situational Awareness with Data Fusion

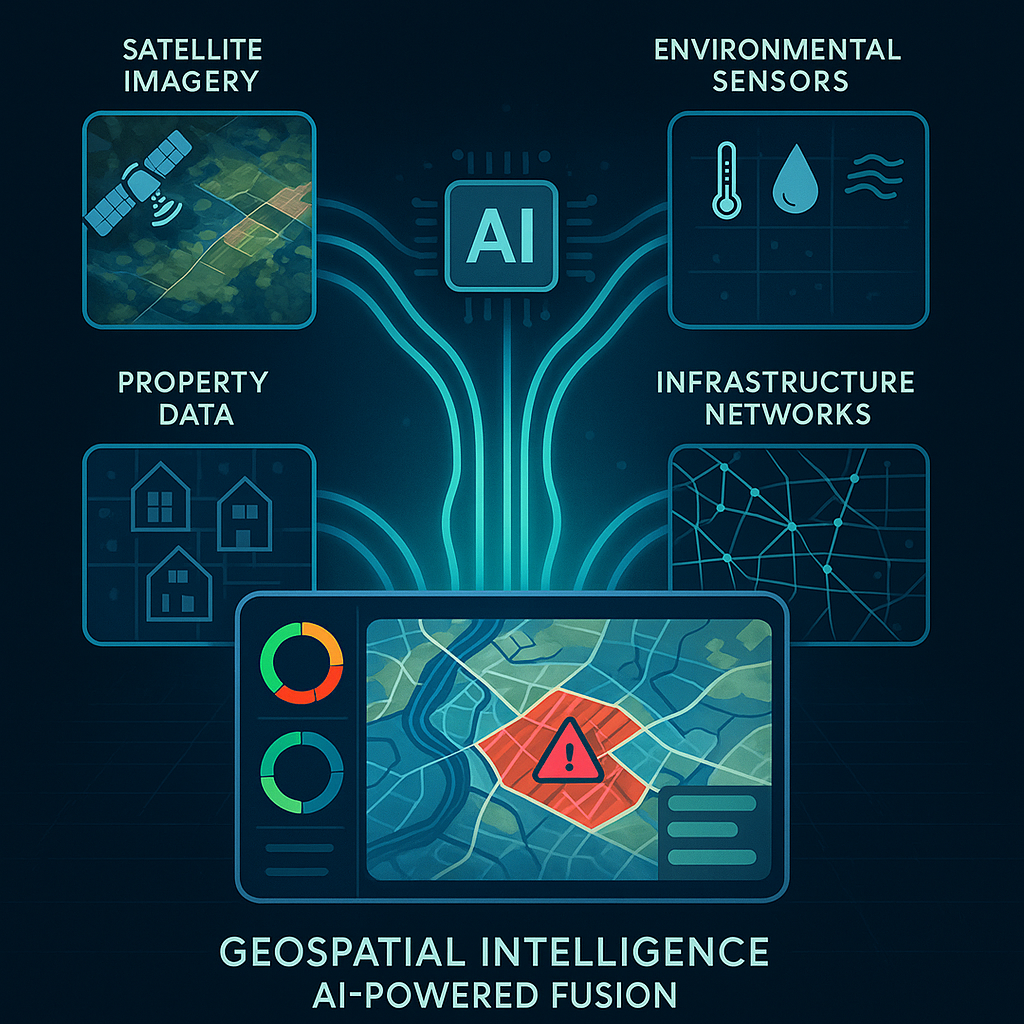

Neural Earth is revolutionizing GeoAI-driven data fusion to enable scalable situational awareness, allowing organizations to transform fragmented environmental, asset, and infrastructure data into unified, actionable intelligence for critical decision-making.

The Data Fragmentation Challenge

Why Traditional Situational Awareness Falls Short

Today's geospatial and risk professionals face an unprecedented challenge: critical decision-making requires synthesizing information from disparate and disconnected sources - satellite imagery, property records, environmental sensors, infrastructure databases, market feeds, and natural hazard models - each with its own format, update cadence, and quality standards. This data fragmentation creates blind spots that traditional situational awareness tools simply cannot overcome. Others have tried to coin phrases for this problem, but I continue to call the work of addressing it "blind spot monitoring." When underwriters assess portfolio exposure or climate risk teams evaluate resilience strategies, they're often forced to manually reconcile conflicting datasets, work from static snapshots that are outdated before analysis begins, and make high-stakes decisions without full spatial context.

The consequences of fragmented data ecosystems are measurable and costly. Property-level risk assessments lack the environmental and infrastructure context needed to quantify cascading exposures. Portfolio managers struggle to maintain data fidelity across thousands of assets when information lives in siloed spreadsheets and legacy GIS platforms. Climate and hazard volatility accelerates faster than quarterly reports can capture, leaving organizations reactive rather than proactive. Traditional tools that rely on periodic batch processing and manual data integration cannot deliver the speed, transparency, or spatial intelligence that modern risk management demands.

What's needed is a fundamental shift from data collection to data fusion - transforming disparate signals into unified intelligence that reveals not just what is happening, but why it matters and what action to take. Humans, and many other species, have evolved for this type of pattern recognition. It is time our technologies did too. This requires moving beyond siloed analytics and static reports that are outdated as soon as they are made, toward platforms engineered for continuous monitoring, pattern recognition, cross-domain integration, and explainable insights that connect environmental conditions, asset characteristics, infrastructure dependencies, and temporal trends into a single, actionable lens.

How GeoAI-Powered Data Fusion Creates Unified Intelligence from Disparate Sources

Artificial intelligence has evolved from a data processing tool into an orchestration engine capable of fusing environmental, property, infrastructure, and market data into coherent, decision-ready intelligence. Modern geospatial AI platforms apply machine learning to automatically ingest heterogeneous data streams - satellite imagery, weather feeds, building footprints, elevation models, parcel records, and real-time sensor networks - then normalize, align, and contextualize them within a unified spatial framework. This fusion process doesn't simply stack layers; it identifies relationships, detects anomalies, and synthesizes cross-domain signals that would remain invisible in isolated datasets.

At the technical core of AI-powered data fusion is the ability to reconcile temporal mismatches, spatial scale differences, and quality variations across sources. Neural networks trained on vast geospatial archives can infer missing attributes, validate data provenance, and generate confidence scores that surface data fidelity concerns before they compromise analysis. When a property record lacks roof condition data, AI models trained on millions of rooftop images can predict the material type and structural integrity with quantified uncertainty. When environmental sensor coverage is sparse, machine learning interpolates risk gradients using terrain, proximity networks, and historical patterns - always maintaining transparency about model assumptions and limitations.
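To make the sparse-sensor case concrete, here is a minimal sketch of spatial interpolation with an attached uncertainty signal. It uses plain inverse-distance weighting, a much simpler stand-in for the machine-learning interpolation described above, and it ignores terrain and network effects entirely. The function name `idw_estimate`, the `(x, y, value)` tuple layout, and the use of nearest-sensor distance as an uncertainty proxy are all assumptions made for illustration, not part of any Neural Earth API.

```python
import math


def idw_estimate(sensors, target, power=2.0):
    """Inverse-distance-weighted estimate at `target`.

    `sensors` is a list of (x, y, value) tuples (hypothetical layout).
    Returns (estimate, nearest_distance). The nearest distance is a crude
    uncertainty proxy: the farther the closest sensor, the less the
    estimate should be trusted.
    """
    weighted_sum = 0.0
    weight_total = 0.0
    nearest = math.inf
    for x, y, value in sensors:
        distance = math.hypot(x - target[0], y - target[1])
        if distance == 0.0:
            # The target sits exactly on a sensor: use its reading directly.
            return value, 0.0
        nearest = min(nearest, distance)
        weight = 1.0 / distance ** power
        weighted_sum += weight * value
        weight_total += weight
    if weight_total == 0.0:
        raise ValueError("at least one sensor is required")
    return weighted_sum / weight_total, nearest


# Two sensors equidistant from the target contribute equally.
estimate, nearest = idw_estimate([(0.0, 0.0, 10.0), (2.0, 0.0, 20.0)], (1.0, 0.0))
print(estimate, nearest)  # 15.0 1.0
```

Returning the distance alongside the estimate is one simple way to keep the "quantified uncertainty" visible to downstream users; a production system would more plausibly report a model-derived confidence interval.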

The result is a living, breathing intelligence layer that updates as new data arrives, continuously refining risk assessments and surfacing emerging patterns. For insurers, this means total insurable value estimates that incorporate real-time construction activity and environmental changes. For asset managers, it delivers portfolio views that connect individual property vulnerabilities to broader infrastructure dependencies and market dynamics. For climate risk analysts, it enables hazard monitoring that tracks not just event occurrence but propagation pathways through built and natural systems. This unified intelligence transforms situational awareness from a static snapshot into a dynamic understanding of interconnected risk.

Near-Real-Time Risk Monitoring

From Static Reports to Continuous Signal Generation

The shift from periodic reporting to near-real-time monitoring represents a paradigm change in how organizations understand and respond to risk. Traditional workflows rely on quarterly assessments, annual updates, and event-triggered analysis, an approach fundamentally misaligned with the velocity of climate-driven hazards, infrastructure changes, and market shifts. Near-real-time platforms ingest streaming data from satellites, weather networks, and IoT sensors, applying AI algorithms that instantly detect anomalies, quantify deviations from baseline, and generate alerts when risk thresholds are crossed. This continuous signal generation enables proactive decision-making rather than reactive damage assessment.
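The idea of quantifying deviations from baseline and alerting when a threshold is crossed can be sketched with a rolling z-score. This is only an illustration under stated assumptions: the function name `detect_anomalies`, the window size, and the threshold of 3 standard deviations are hypothetical choices, and a real streaming platform would likely use more robust statistics and seasonality-aware baselines.

```python
from statistics import mean, stdev


def detect_anomalies(readings, baseline_size=5, z_threshold=3.0):
    """Flag readings that deviate sharply from a rolling baseline.

    Each reading is compared against the mean and standard deviation of
    the `baseline_size` readings before it. Returns a list of
    (index, value, z_score) tuples for readings at or beyond the threshold.
    """
    alerts = []
    for i in range(baseline_size, len(readings)):
        window = readings[i - baseline_size:i]
        mu = mean(window)
        sigma = stdev(window)
        if sigma == 0:
            # A perfectly flat baseline gives an undefined z-score; this
            # sketch skips the point rather than guessing.
            continue
        z = (readings[i] - mu) / sigma
        if abs(z) >= z_threshold:
            alerts.append((i, readings[i], z))
    return alerts


# Hypothetical flood-gauge readings with one sudden spike at index 6.
gauge = [1.0, 1.1, 0.9, 1.0, 1.1, 1.0, 3.5, 1.0]
for index, value, z in detect_anomalies(gauge):
    print(f"alert at index {index}: value={value}, z={z:.1f}")
```

Note that once the spike enters the baseline window it inflates the standard deviation, so the readings right after it are less likely to be flagged. That trade-off between sensitivity and false positives is exactly the tuning problem the operational side has to manage.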

Implementing near-real-time risk monitoring at scale requires solving both technical and operational challenges. On the technical side, platforms must be engineered for speed, capable of processing terabytes of geospatial data, running complex spatial models, and delivering instant query responses across portfolios containing thousands of properties. On the operational side, organizations need workflows that translate continuous signals into actionable intelligence without overwhelming analysts with false positives or noise. This is where frameworks like RiskRank and various other Neural Earth indices demonstrate their value. By normalizing diverse risk factors into cross-comparable 1-10 scores that continuously update, teams can prioritize attention, track temporal trends through RiskTime, and model cascading scenarios through a RiskGraph without requiring deep technical expertise in every underlying data source.
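As a rough illustration of what normalizing diverse factors into cross-comparable 1-10 scores can look like, the sketch below applies min-max scaling across a portfolio and then takes a weighted average of factor scores. This is not how RiskRank is computed (the source does not describe its method); `scale_to_1_10`, `combined_score`, and the example weights are hypothetical.

```python
def scale_to_1_10(values):
    """Min-max scale raw factor values across a portfolio to integers 1..10."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # No spread across the portfolio; assigning the lowest score is an
        # arbitrary choice made for this sketch.
        return [1 for _ in values]
    return [1 + round(9 * (v - lo) / (hi - lo)) for v in values]


def combined_score(factor_scores, weights):
    """Weighted average of per-factor scores; stays on the same 1-10 scale."""
    total_weight = sum(weights.values())
    weighted = sum(factor_scores[name] * w for name, w in weights.items())
    return weighted / total_weight


# Hypothetical raw wildfire-exposure values for three properties.
print(scale_to_1_10([0.0, 10.0, 90.0]))  # [1, 2, 10]

# Hypothetical per-factor scores and weights for a single property.
print(combined_score({"wildfire": 8, "flood": 2}, {"wildfire": 3, "flood": 1}))  # 6.5
```

Because the scaling is relative to the portfolio, scores shift whenever the portfolio's minimum or maximum changes. A scheme meant to be comparable across portfolios would need fixed reference bounds instead.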

The business impact of near-real-time monitoring extends across the risk management lifecycle. Underwriters gain the ability to price risk based on current conditions rather than historical averages, incorporating wildfire perimeters, flood gauge readings, and storm tracks as they evolve. Portfolio managers can monitor concentration risk dynamically and receive alerts when multiple assets face correlated exposures to emerging hazards. Emergency response teams shift from post-event damage assessment to pre-event preparation, using predictive signals to stage resources and activate continuity plans. By collapsing the latency between event occurrence and decision response, near-real-time platforms fundamentally change what's possible in risk mitigation and resilience planning.

Building Explainable Situational Awareness

The Science Behind Actionable Risk Insights

Actionable intelligence demands more than accurate predictions. It requires transparency about how conclusions are reached, what data drives assessments, and where uncertainty exists. Explainable AI addresses the 'black box' problem that has historically limited the adoption of machine learning in high-stakes decision-making. When a platform assigns a value to a property or portfolio, stakeholders need to understand the contributing factors, such as:

- Is elevated risk driven by wildfire proximity, roof condition, infrastructure vulnerability, or a combination?
- What temporal trends are influencing the assessment?
- Which data sources carry the most weight, and where do confidence intervals widen?

Building explainable situational awareness requires architecting transparency into every layer of the analytical stack. At the data layer, provenance tracking maintains full lineage from raw sensor readings through derived features, enabling users to audit inputs and validate quality. At the model layer, techniques like attention mechanisms surface which features drive predictions, translating complex neural network outputs into human-interpretable explanations. At the presentation layer, interactive visualizations let users drill from summary scores into underlying evidence - viewing satellite imagery that informed roof condition estimates, exploring the hazard models that quantified wildfire exposure, or examining the infrastructure networks that reveal cascading dependencies.
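The data-layer and model-layer ideas above can be sketched together: each feature carries its provenance, and a score is broken down into per-feature contributions. The sketch assumes a simple additive model (value times weight) instead of the attention mechanisms mentioned above, because additive contributions are easy to read off directly. The `Feature` class, its fields, and the example weights and sources are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    value: float   # normalized to the range 0..1
    weight: float  # hypothetical model weight
    source: str    # provenance: where the value came from


def explain(features):
    """Return (total_score, contributions ranked by absolute size).

    Each contribution is a (name, contribution, source) tuple, so a user
    can see both what drove the score and which dataset supplied it.
    """
    contributions = [(f.name, f.value * f.weight, f.source) for f in features]
    total = sum(contribution for _, contribution, _ in contributions)
    ranked = sorted(contributions, key=lambda c: abs(c[1]), reverse=True)
    return total, ranked


features = [
    Feature("roof_condition", 0.25, 2.0, "aerial imagery model"),
    Feature("wildfire_proximity", 0.5, 4.0, "hazard perimeter feed"),
]
total, ranked = explain(features)
print(total)  # 2.5
for name, contribution, source in ranked:
    print(f"{name}: {contribution} (from {source})")
```

Keeping the source string attached to every contribution is the small design choice that matters here: the explanation and the audit trail come from the same record, so they stay in sync.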

The science behind explainable risk insights also extends to communicating uncertainty honestly and usefully. Confidence scores on roof analytics indicate where manual verification may be warranted. Temporal tracking reveals when data staleness may affect accuracy. Scenario modeling exposes sensitivity to key assumptions, helping decision-makers understand how conclusions might shift under different conditions. This commitment to transparency builds trust, enables regulatory compliance, supports audit requirements, and most importantly, empowers users to combine AI-generated intelligence with domain expertise and contextual knowledge. Explainability transforms AI from an opaque oracle into a collaborative partner in decision-making.

Scaling Geospatial Intelligence Across Teams and Portfolios with Modern Platforms

As organizations expand their use of geospatial AI from pilot projects to enterprise-wide operations, scalability becomes paramount - not just in technical capacity but in organizational adoption. Modern platforms address this through flexible architectures that support everything from individual analysts working on single-property due diligence to enterprise teams managing portfolios of tens of thousands of assets across multiple geographies. Role-based access controls ensure underwriters, risk managers, portfolio analysts, and executives each see views tailored to their responsibilities. Batch upload capabilities enable teams to ingest entire portfolios at once, while portfolio management features organize assets by region, asset class, or custom criteria that align with business structure.

Scaling intelligence also means democratizing access to sophisticated geospatial analysis without requiring every user to become a GIS expert. Natural-language AI assistants enable conversational interaction: users can ask questions in plain English about portfolio exposure, request comparative analyses across properties, or explore 'what-if' scenarios without writing code or mastering complex interfaces. Pre-built reporting workflows generate standardized outputs for underwriting packages, board presentations, or regulatory filings, ensuring consistency while allowing customization. Data catalogs provide self-service access to environmental layers, hazard models, and property attributes, accelerating time-to-insight and reducing bottlenecks on specialized technical staff.

From an infrastructure perspective, platform scalability depends on cloud-native architectures that handle compute-intensive spatial operations, massive data volumes, and concurrent user loads without performance degradation. Equally important is business model scalability: subscription tiers that align cost with usage, starting with accessible entry points for smaller teams and scaling to enterprise agreements with custom integrations, API access, and dedicated support. This flexible approach lets organizations start with focused use cases, demonstrate value, and expand systematically across departments and workflows. When geospatial intelligence platforms are designed for scalability at every level (technical, operational, and commercial), they become foundational infrastructure for risk-aware decision-making rather than specialized tools confined to technical experts.